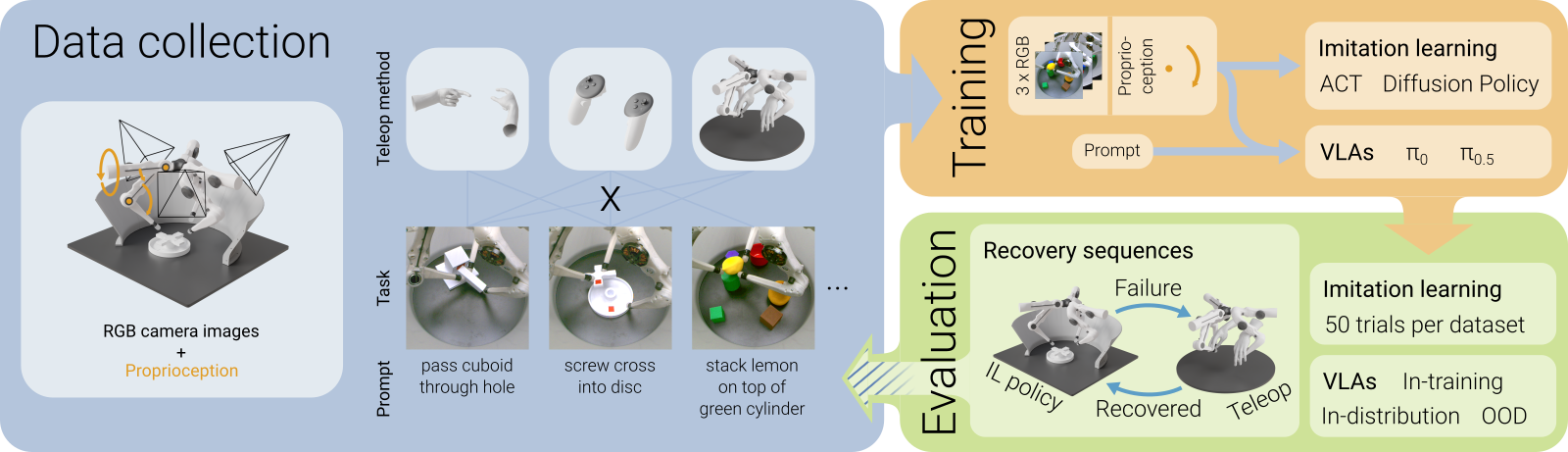

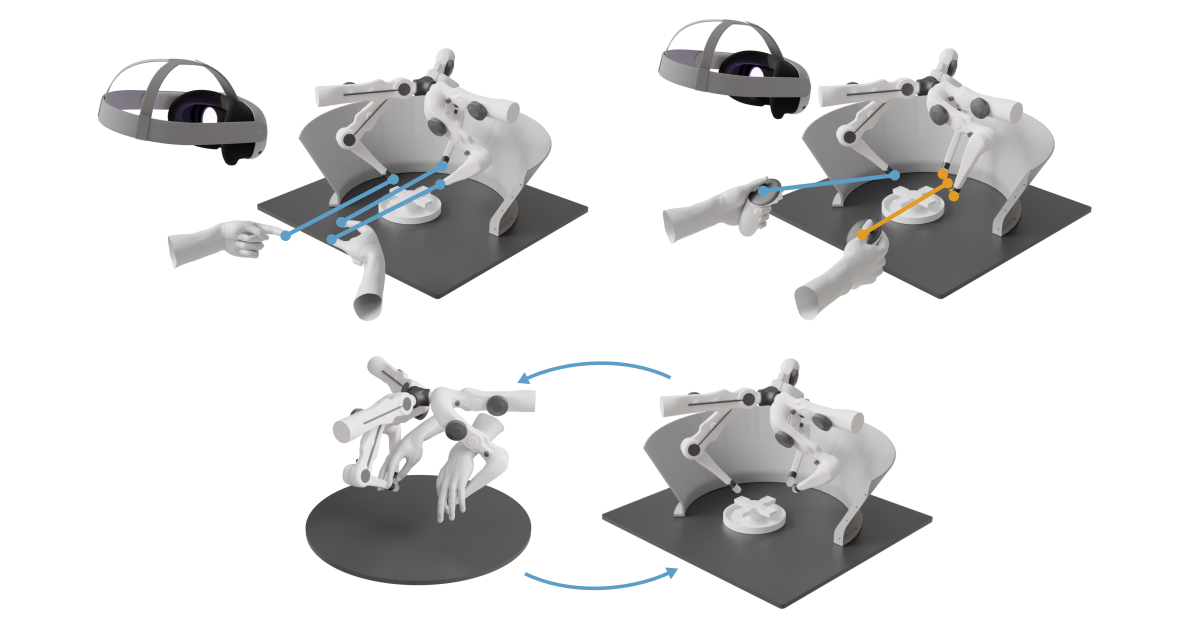

Humans have proven to be powerful teachers of robot manipulation skills via imitation learning. How can we leverage this potential for robots with a morphology unlike our own? In this work, we demonstrate that teleoperation of a three-fingered robot morphology is both feasible and effective for dexterous manipulation tasks. To address the challenges posed by the embodiment gap between human demonstrators and non-humanoid robots, we investigate three teleoperation strategies: fingertip matching using hand tracking from a commercial AR headset, control via motion controllers, and kinesthetic teaching with a leader robot. We collect demonstrations on a suite of dexterous manipulation tasks, including assembling a 3D-printed object and folding a napkin. We then train manipulation policies with ACT and Diffusion Policy and evaluate their success rates on the respective tasks. Policies trained on data collected via motion controllers and kinesthetic teaching generally outperform those trained on hand-tracking data. We additionally fine-tune vision-language-action models on pick-and-place data collected with the TriFinger robot. The resulting policies achieve high success rates on in-distribution tasks and generalize to objects not seen during fine-tuning, demonstrating that large-scale pretraining can be leveraged for this non-standard embodiment. We release open-source datasets and policy checkpoints to support further research in non-anthropomorphic dexterous manipulation.